"What if a trustworthy AI could help you see media bias and understand the full spectrum of news perspectives?"

Ana: AI News Aggregator

Empowering users with balanced news perspectives and highlighting media bias

Overview

In a media environment shaped by disinformation and polarization, Ana redefines news engagement through explainable AI and media literacy.

Final Solution

Ana: An AI-powered news aggregator that detects bias, educates users, and breaks information bubbles through transparent analysis and interactive exploration.

Article Bias Analysis

Comprehensive bias analysis workflow for any article or link

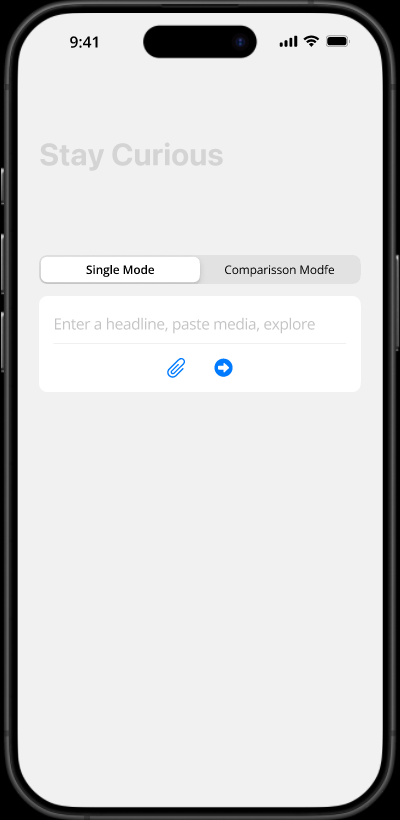

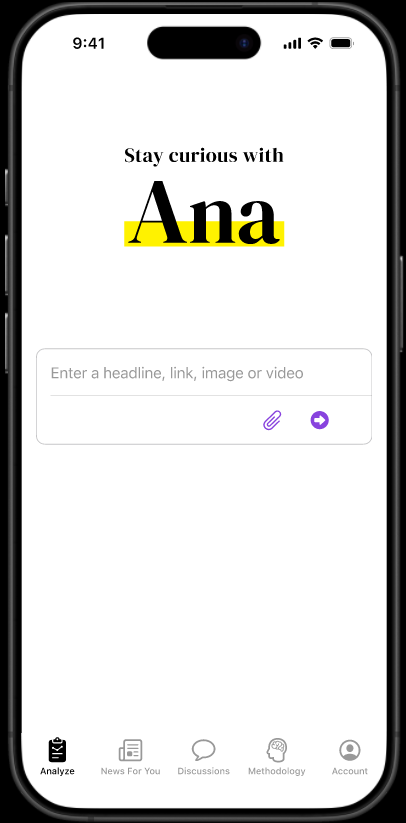

Start on Homepage

User enters any article link or text to be analyzed for bias

Submit for Analysis

Click the arrow to send request to Ana's analysis engine

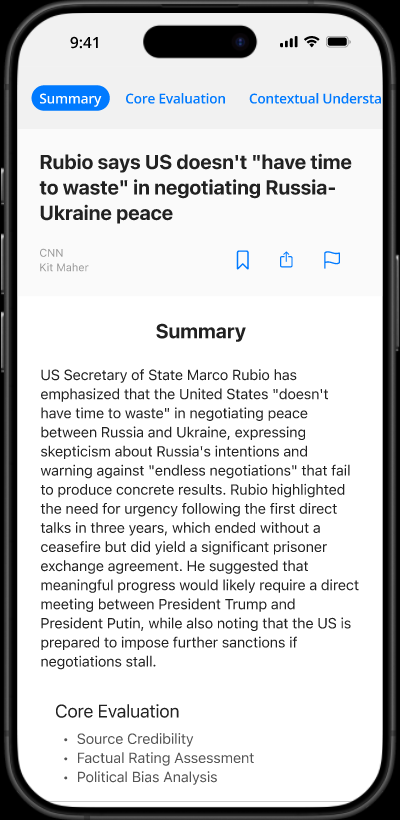

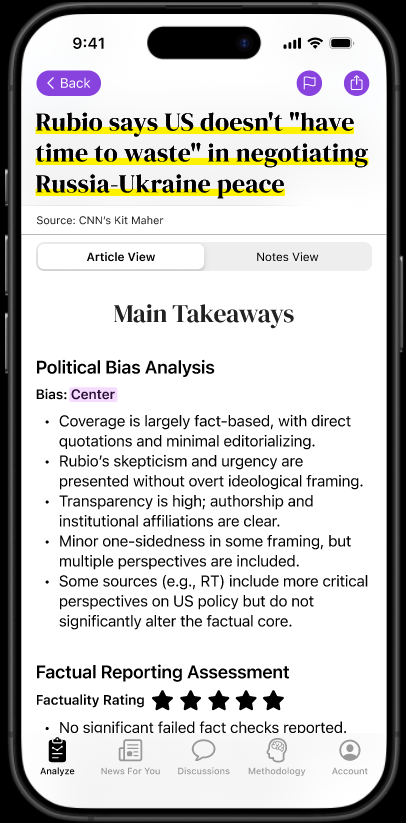

Comprehensive Bias Report

Receive detailed analysis with main takeaways and insights

Political Bias Analysis

View bias highlighted with color coding throughout the content

Factual Reporting & Source Credibility

Assess accuracy and reliability of the source

Deeper Insights & Sharing

Explore "The Bigger Picture" and "About the Source" sections, share reports with friends

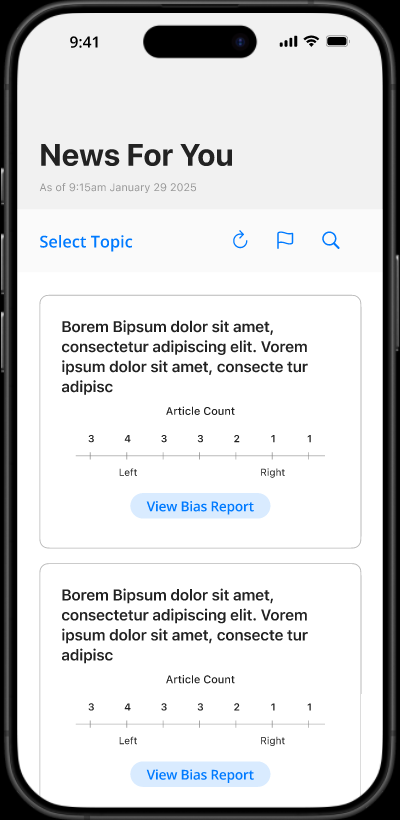

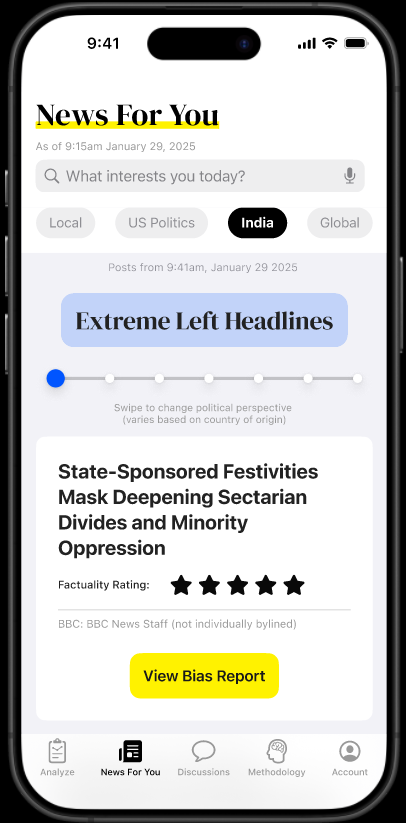

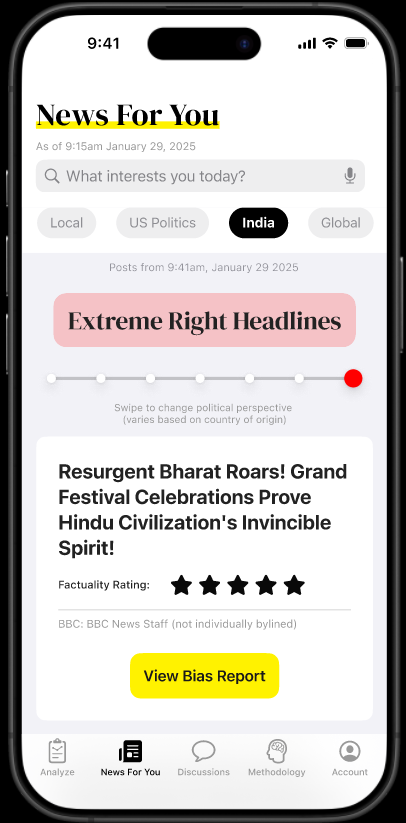

News For You Page

Personalized news discovery with bias transparency

Start with Empty State

Clean interface with search bar for topic discovery

Search or Browse Topics

Enter any topic or choose from personalized recommendations

Preference-Based Results

See articles curated based on onboarding preferences

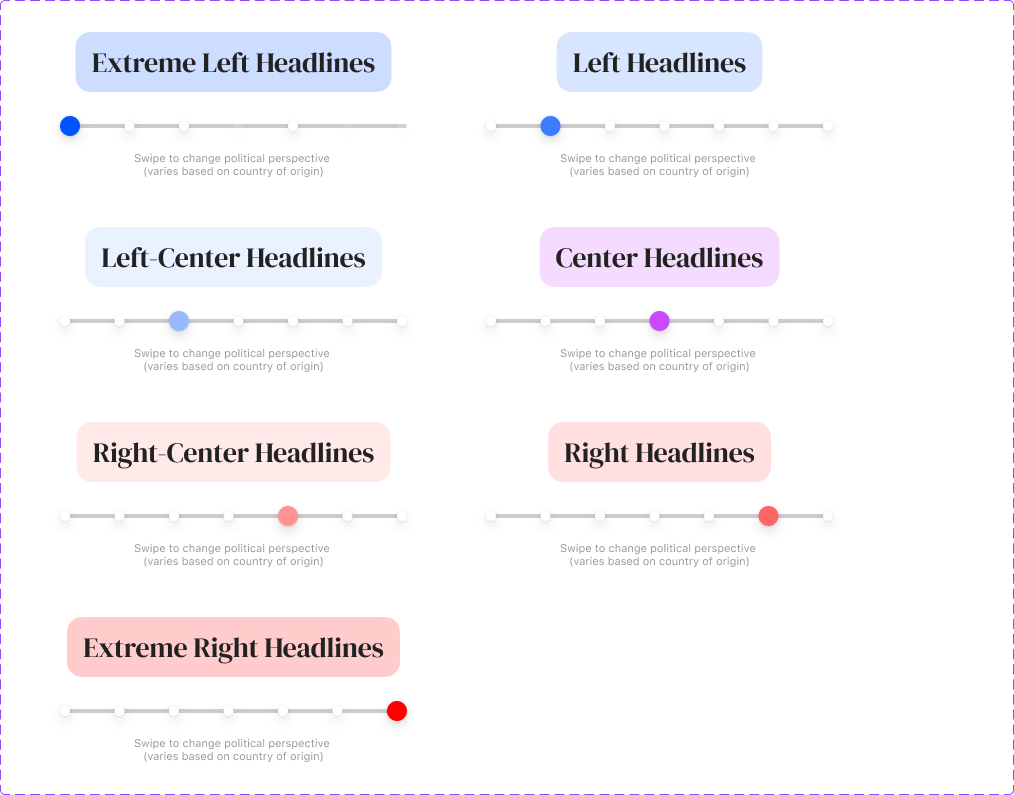

Browse by Bias Perspective

Choose to explore articles with specific political biases

Detailed Bias Reports

Access comprehensive bias analysis for any article of interest

Introduction

Our team developed Ana, an AI-powered news aggregator that detects and flags bias in news articles. Biased sources often dominate the information landscape due to speed and reach, leaving users to manually discern bias—a cognitively demanding task, especially for younger audiences. Ana addresses this through automated, explainable bias detection, educational tools, and interactive features that empower users to access balanced information and break out of information bubbles.

The Problem

Gen Z users (18-28) are trapped in algorithmic information bubbles. Biased news dominates due to speed and reach, while users struggle with manually discerning subtle framing, selective facts, and linguistic patterns that shape information perception.

Our Approach

Competitive Analysis

We analyzed Ground News, AllSides, and TIMINO. While competitors provide varied perspectives, Ana differentiates through micro-lessons in media literacy, user-reporting mechanisms, and a bias slider for exploring the ideological spectrum—embedded in a Gen Z-optimized mobile interface.

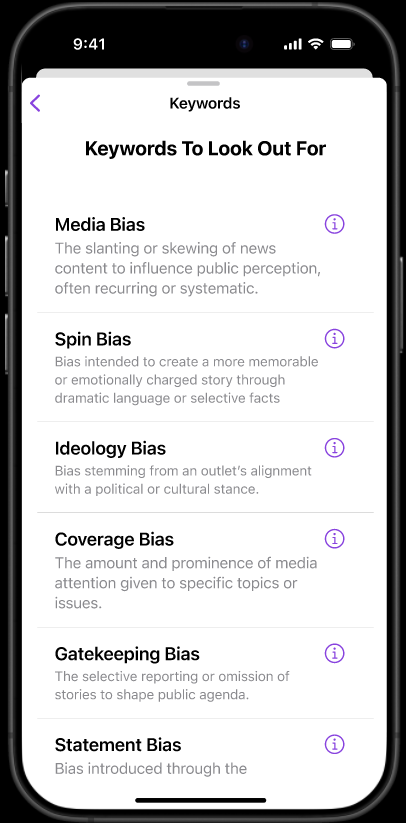

Literature Review

Synthesizing 63 studies revealed that media bias is often subtle and context-dependent, with current AI systems still in early development. We identified 17 distinct bias forms—from spin and ideology to gatekeeping bias—which shaped Ana's bias taxonomy and training examples.

Psychological frameworks for trustworthy AI emphasize transparency, context-awareness, and accountability, influencing Ana's visual explanations, bias-source links, and user-controlled feedback loops.

Design Principles

Three core principles—transparency, education, and empowerment—guide Ana from onboarding tutorials explaining bias detection to bias sliders inviting exploration of contrasting narratives. Ana is both a news filter and literacy tool designed to meet users' cognitive, emotional, and social needs.

Transparency

Clear explanations of how bias is detected and analyzed

Education

Embedded media literacy tools and micro-lessons

Empowerment

Interactive features for exploring diverse perspectives

Goals

Research validated user concerns about mainstream and AI-generated news, revealing deep skepticism and misinformation fears. Our refined goals focused on:

Reading Habits & Preferences

Understand user news consumption patterns and topic preferences, confirming interest in political, social, and environmental news.

AI Prompt Development

Refined scope to iterate on focused prompts for one model (ChatGPT) based on research cautioning against uncontrolled outputs.

User vs. AI Bias Perception

Revealed users and AI often differ in bias interpretation, particularly with subtle framing, informing explainable bias visualizations.

Media Literacy Enhancement

In response to strong demand for contextual learning, added micro-lessons and onboarding tutorials that build literacy without overwhelming users.

By combining evidence-based design with human-centered AI, Ana redefines how users engage with the news. It is not just a content delivery system but a cognitive aid built to detect bias, illuminate nuance, and cultivate critical thinking in a media landscape that urgently demands it.

Research Methods

A multi-stage, mixed-methods research approach grounding every design decision in evidence.

Our multi-stage, mixed-methods approach combined qualitative exploration with quantitative validation, followed by systematic AI development and iterative user testing.

Competitive Analysis

Analysis of Ground News, AllSides, and TIMINO revealed opportunities for differentiation through AI integration and educational features:

Ground News

Offers tools to track media bias and compare coverage across sources. Features a bias checker, bias distribution visuals, and AI-generated summaries. Available via desktop, mobile app, website, and Chrome extension.

Strengths: Strong multi-platform presence, visual bias distribution

Gaps: Limited educational content

AllSides

Emphasizes presenting diverse political and social perspectives. Includes a media bias vote and human-written articles to highlight contrasting viewpoints. Available on desktop and mobile.

Strengths: Community-driven bias assessment, diverse perspectives

Gaps: Lacks AI summaries and in-depth bias comparison tools

TIMINO

Provides bias insights through a checker and comparison features. Relies on human-written content but does not support AI summaries or article reporting.

Strengths: Focused bias detection tools

Gaps: No AI summaries, limited interactive features

Key Opportunity Identified:

Ana integrates micro-lessons in media literacy, user-reporting mechanisms for misinformation, and a bias slider for exploring articles across the ideological spectrum—features not comprehensively integrated in any existing platform.

Literature Review Findings

Key Human Tendencies in Information Consumption

Naive Realism

Leads individuals to view their interpretations as objective truths, causing them to dismiss opposing perspectives as biased or misinformed. This hinders balanced news consumption.

Confirmation Bias

Drives people to seek information that aligns with their existing beliefs. In digital spaces, this is amplified by algorithm-driven "information bubbles" which restrict exposure to diverse viewpoints.

Media Bias Complexity

We identified 17 types of media bias, including spin, ideology, omission, placement, labeling, and source selection. Experts can detect these forms, but current AI systems, still in early development, largely cannot.

This gap led us to explore prompt engineering and trust-building between humans and AI content.

Building Trustworthy AI

Research on trustworthy AI identified three critical components for building user trust:

The Trustor (User)

The user's background (age, education, job, culture, and experience with AI) shapes their relationship with AI systems.

The Trustee (AI System)

An AI system increases user trust when it is accountable, transparent, and protects privacy with proper safeguards.

Interactive Context

The context in which AI is used affects perceived trust, depending on how favorably media and society view it.

User Research

User Interviews with Card Sorting

Nine semi-structured interviews explored political views, bias perceptions, and news habits. Participants completed card sorting for concepts like "source credibility" and "political bias," informing Ana's information architecture. Results organized topics into: Core Evaluation, Contextual Analysis, and Explore More.

Qualtrics Survey (n=43)

A comprehensive survey of US residents aged 20-34, analyzed in R, revealed key insights:

Demographics & Usage

- Total participants: 43 (US residents)

- Age range: 20-34 (avg: 26.7)

- Political leaning: 68% left-leaning

- AI news aggregator usage: 82% never used

Key Insights

- Market education potential: High unfamiliarity with AI news tools

- Full story priority: Users want depth and multiple perspectives

- Political perspective impact: System must account for users' own biases

- Generalization limits: Young, politically homogeneous sample

Limitation: The sample's left-leaning political composition (68%) and modest size (43 responses) may have impacted perceptions, with participants potentially having difficulty recognizing bias aligned with their own views.
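As a rough illustration of why the modest sample size matters, the sketch below computes a 95% margin of error for the 68% left-leaning share with 43 respondents. It uses the standard normal approximation for a proportion and assumes a simple random sample, which a small survey like this one may not satisfy. The function name is hypothetical.

```python
import math

def proportion_margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Half-width of a normal-approximation confidence interval for a proportion."""
    if not 0.0 <= p <= 1.0 or n <= 0:
        raise ValueError("p must be in [0, 1] and n must be positive")
    return z * math.sqrt(p * (1.0 - p) / n)

# Figures taken from the survey summary: 68% left-leaning, 43 responses.
margin = proportion_margin_of_error(p=0.68, n=43)
print(f"68% +/- {margin * 100:.1f} percentage points")  # 68% +/- 13.9 percentage points
```

Under these assumptions the uncertainty is roughly 14 percentage points in either direction, which is why the survey findings are best read as directional rather than precise.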

Data Analysis

Affinity Diagrams

Each team member coded interviews using an inductive coding method, and the findings were aggregated into a comprehensive affinity diagram in FigJam to identify key themes.

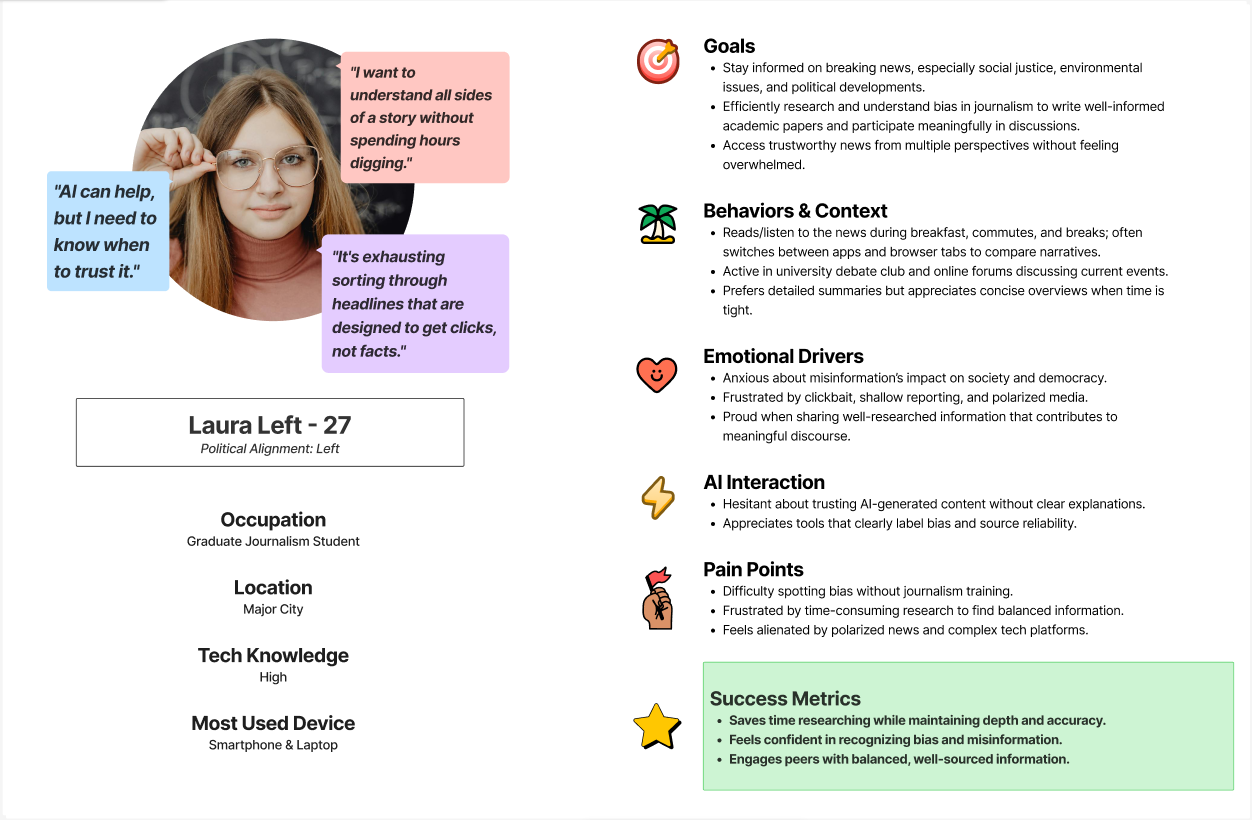

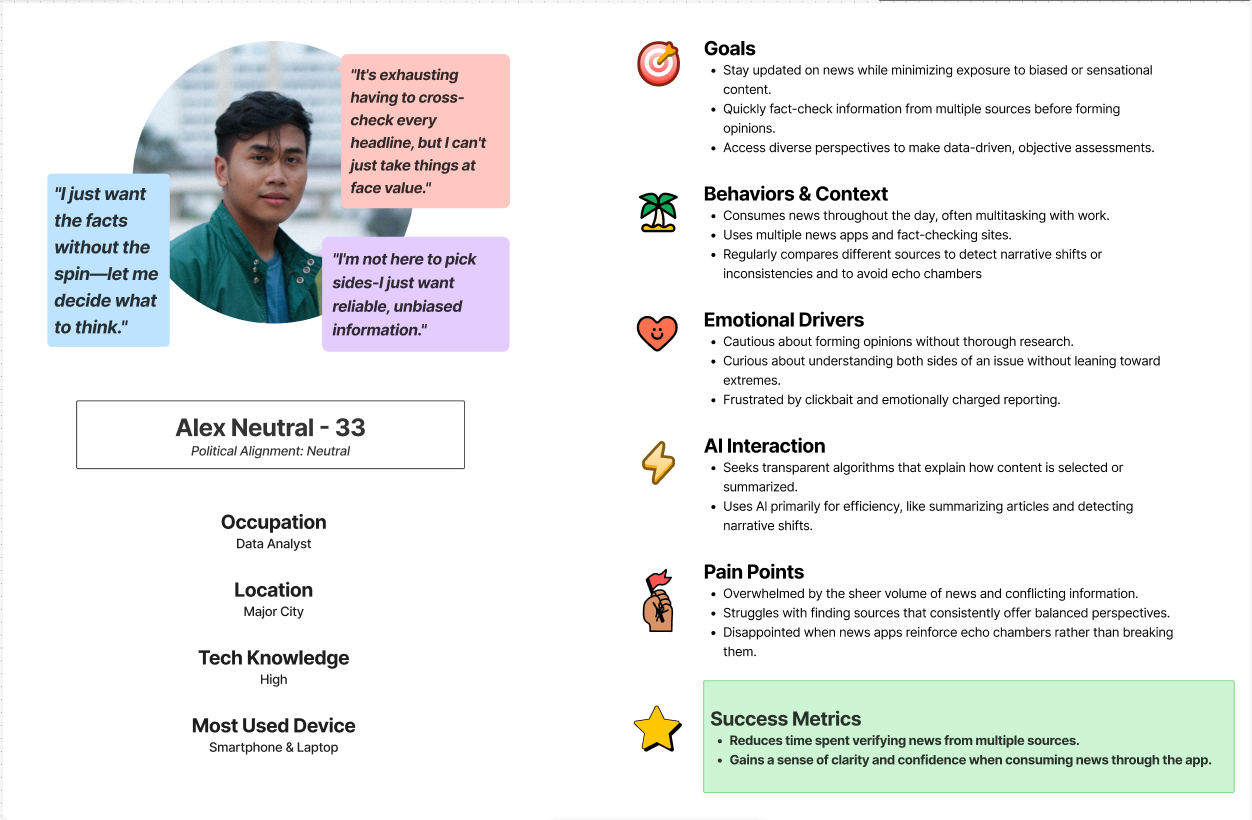

User Personas

Insights were distilled into three distinct user personas, including "Laura Left" and "Alex Neutral," that capture diverse goals, behaviors, and pain points.

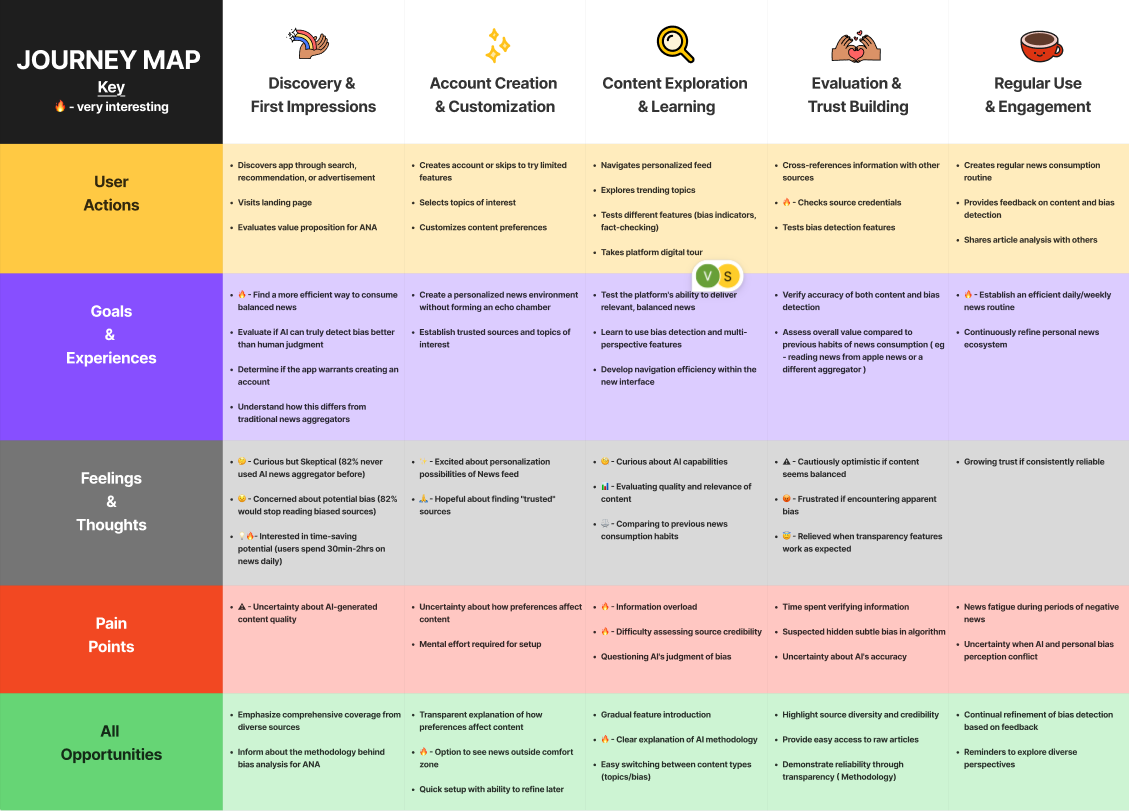

User Journey Map

Visualized typical user path from discovering Ana to building trust, highlighting "Evaluation & Trust Building" as the most critical stage.

The User's Dilemma

Interviews revealed engaged yet weary news consumers who described misinformation as a "dangerous virus." Users felt overwhelmed by "misinformation and exaggeration," to the point of needing mental health breaks from the news.

This skepticism extends to both content and platforms. Users perform laborious verification rituals: cross-referencing sources, checking comments for dissent, and seeking journalistic distance. AI enters as both a potential "Socratic method machine" and a feared source of additional bias.

Survey Insights: Bias Detection Challenges

Our headline analysis revealed significant "blind spots" in how users perceive bias, validating Ana's core premise:

Subtle Framing

46.2% misidentified a right-center headline ("Trump needs unity...") as "Least Biased," showing users miss bias embedded in seemingly neutral language.

Subject vs. Perspective

66.7% misidentified a left-leaning headline critical of GOP as right-leaning, confusing the article's subject with its critical perspective.

Overt Bias

51.3% accurately identified headlines with overt, emotionally charged language, showing better recognition of obvious bias.

Creating Custom Research-Based GPT for Ana

We developed a rule-based analytical framework ensuring Ana's analysis is transparent and defensible, grounded in information science and media analysis rather than arbitrary "black box" outputs.

Source Credibility Assessment

We adapted the "5 Ws" method, a standard framework used by academic institutions like the University of Washington Libraries to evaluate source reliability.

- Author credentials assessment

- Publisher reputation evaluation

- Content purpose analysis

- Sourcing practices review

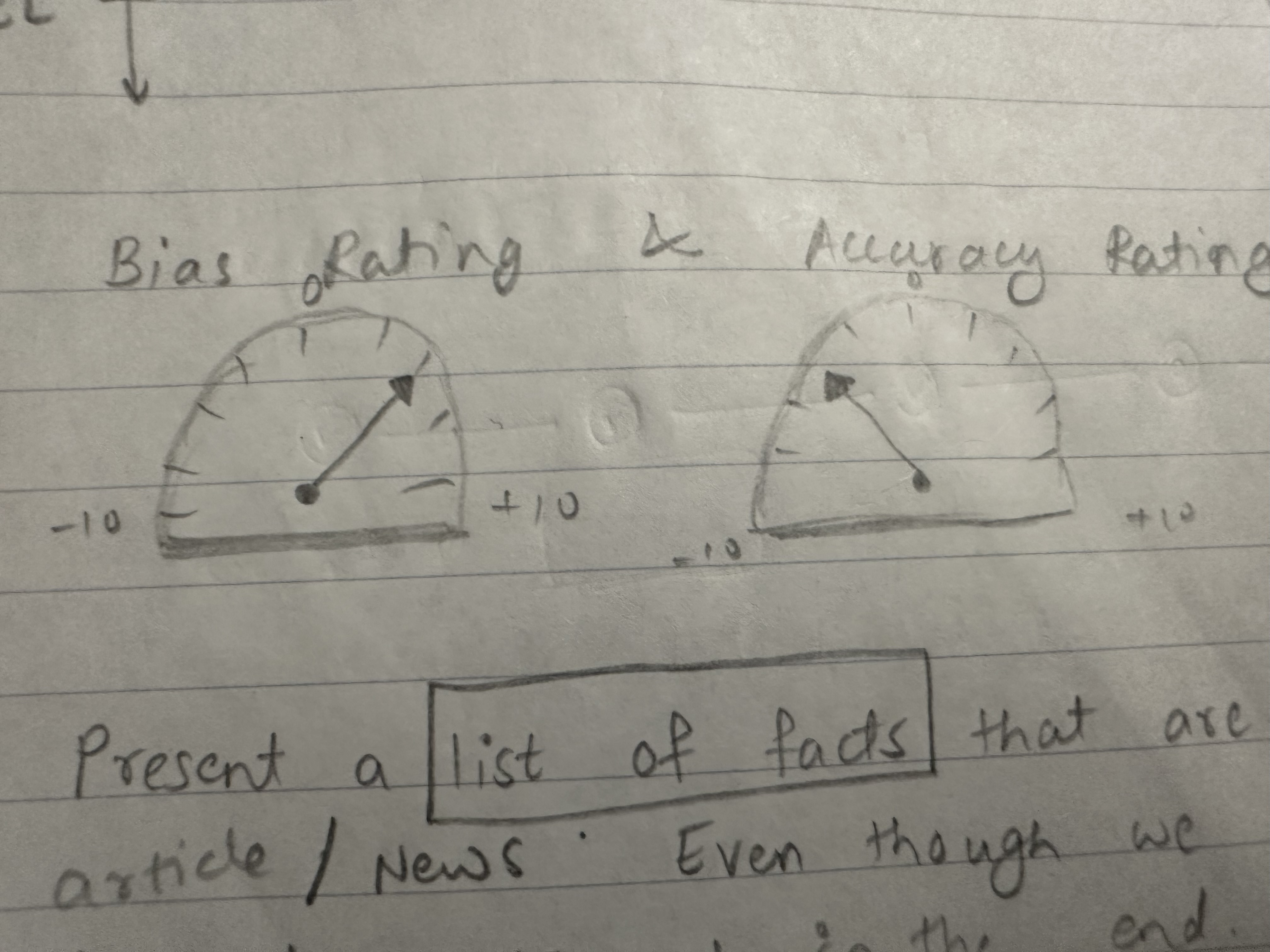
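One way to picture the four credibility checks is as a simple checklist structure. The sketch below is illustrative only: the class name, the identifiers, and the pass/fail representation are assumptions, not Ana's actual implementation or the University of Washington Libraries' rubric.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# The four criteria listed above; the dictionary keys are hypothetical identifiers.
CRITERIA = {
    "author_credentials": "Author credentials assessment",
    "publisher_reputation": "Publisher reputation evaluation",
    "content_purpose": "Content purpose analysis",
    "sourcing_practices": "Sourcing practices review",
}

@dataclass
class CredibilityChecklist:
    """Records a pass/fail judgment and a short note for each criterion."""
    results: Dict[str, bool] = field(default_factory=dict)
    notes: Dict[str, str] = field(default_factory=dict)

    def record(self, criterion: str, passed: bool, note: str = "") -> None:
        if criterion not in CRITERIA:
            raise KeyError(f"unknown criterion: {criterion}")
        self.results[criterion] = passed
        self.notes[criterion] = note

    def unreviewed(self) -> List[str]:
        """Identifiers of criteria that have not been assessed yet."""
        return [c for c in CRITERIA if c not in self.results]

    def concerns(self) -> List[str]:
        """Readable labels of criteria that failed review."""
        return [CRITERIA[c] for c in CRITERIA if self.results.get(c) is False]

checklist = CredibilityChecklist()
checklist.record("author_credentials", True, "Named staff reporter")
checklist.record("sourcing_practices", False, "No primary sources linked")
print(checklist.concerns())    # ['Sourcing practices review']
print(checklist.unreviewed())  # ['publisher_reputation', 'content_purpose']
```

Keeping a note alongside each judgment mirrors the transparency principle: a report can show why a source raised a concern, not only that it did.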

Political Bias Scoring

We operationalized the methodology of Media Bias/Fact Check (MBFC), adopting their weighted scoring system across multiple dimensions.

- Economic systems evaluation

- Social values assessment

- Editorial bias patterns

- Seven-point spectrum placement
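To make the idea of weighted, multi-dimension scoring concrete, here is a minimal sketch. The dimension names follow the list above, but the weights, the -10 to +10 score range, the cut-offs, and the function names are illustrative assumptions. They are not MBFC's published values or Ana's actual configuration.

```python
from typing import Mapping

# Hypothetical weights for illustration only; they sum to 1.0.
WEIGHTS = {
    "economic_systems": 0.35,
    "social_values": 0.35,
    "editorial_bias": 0.30,
}

# Assumed seven-point spectrum as (inclusive upper bound, label) pairs.
# In this sketch, scores run from -10 (far left) to +10 (far right).
SPECTRUM = [
    (-8.0, "Extreme Left"),
    (-5.0, "Left"),
    (-2.0, "Left-Center"),
    (2.0, "Least Biased"),
    (5.0, "Right-Center"),
    (8.0, "Right"),
    (10.0, "Extreme Right"),
]

def weighted_bias_score(scores: Mapping[str, float]) -> float:
    """Combine per-dimension scores (-10..+10) into one weighted score."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)

def spectrum_label(score: float) -> str:
    """Map a weighted score onto the seven-point spectrum."""
    if not -10.0 <= score <= 10.0:
        raise ValueError("score must be between -10 and +10")
    for upper_bound, label in SPECTRUM:
        if score <= upper_bound:
            return label
    return SPECTRUM[-1][1]  # not reached: the last bound is +10

example = {"economic_systems": 4.0, "social_values": 3.0, "editorial_bias": 2.0}
score = weighted_bias_score(example)
print(round(score, 2), spectrum_label(score))  # 3.05 Right-Center
```

Because every step is an explicit rule, each placement can be traced back to the dimension scores that produced it, which is the property that makes the rating explainable to users.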

Bias Check Validation & User-AI Perception Gap

To validate Ana's bias detection accuracy without introducing system bias, we examined the user-AI perception gap through focused testing.

Media Bias Detection Sprint

An in-class "Media Bias Detection Sprint" had classmates manually analyze headlines for bias types (spin, statement bias) and compare their findings to Ana's AI output.

Human Analysis Patterns

Participants were often swayed by emotional language (e.g., the word "bloodbath"), focusing on surface-level emotional triggers rather than systematic bias patterns.

AI Analysis Strengths

The AI could more systematically identify and categorize bias types, providing consistent methodology across different content samples.

Outcome: This experiment reinforced the need for a methodical, rule-based tool to augment human intuition and successfully validated our goal of comparing user and AI perceptions of bias.

Iterative Design & Testing

From concept to prototype: How user feedback and peer evaluation shaped Ana's evolution through structured design iterations.

Our design process moved from low-fidelity concepts to a refined mid-fidelity prototype through structured, iterative feedback and testing cycles.

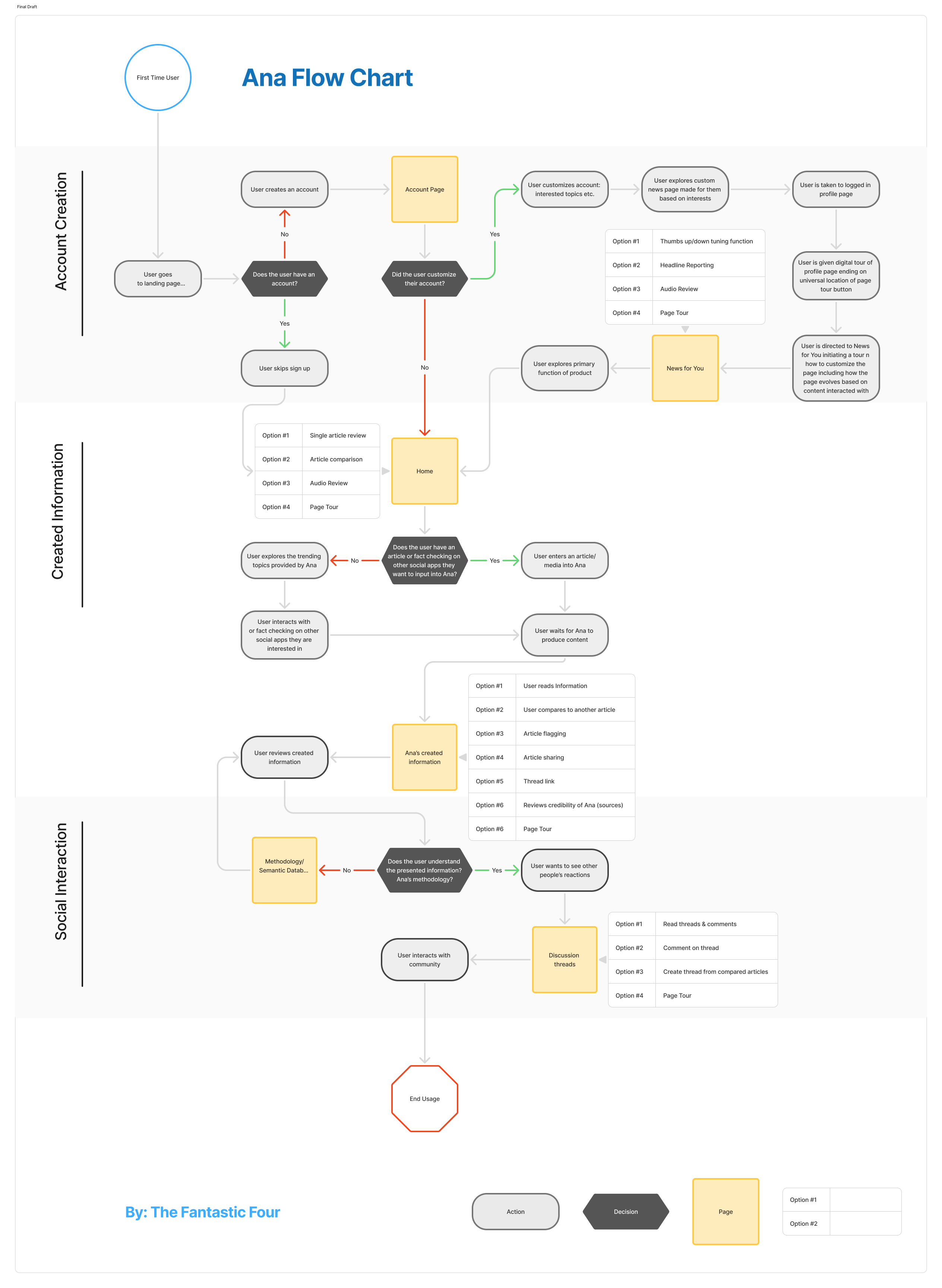

Early Stages & User Flow Mapping

We created a user flow chart of various tasks and functionality to define Ana's MVP, grounding our decision-making and identifying the most important aspects to design and test.

The most important flows identified included:

Lo-Fi Prototyping

Ana's primary function is providing article political bias information. We kicked off design with collaborative sketches exploring screen concepts. Figma-proficient teammates then created wireframes, mapping basic user flow and information architecture.

Lo-Fi Home Screen

Initial Layout Concept

Lo-Fi Bias Analysis

Core Feature Wireframe

Lo-Fi News For You

Article Discovery Flow

Peer Evaluation: RFP Exchange with "Her Team"

We conducted an RFP exchange with "Her Team" for an expert cognitive walkthrough of our lo-fi prototype. Their evaluation provided external validation and critique that informed design changes.

Strengths Identified

- Core concept was strong and compelling

- Onboarding flow was "smooth and intuitive"

- Successfully provided detailed bias information

Critical Weaknesses

- Inconsistent design and unclear terminology

- Non-functional navigation elements ("Core Evaluation")

- Meaningless visual indicators without context

- "Home" and "News for You" pages were conflated

Impact: This peer feedback was instrumental in validating our decision to pivot to a mid-fidelity design using a standardized iOS component library, which would resolve the inconsistencies in navigation and visual language that were undermining the user experience.

Design Pivot to Mid-Fi

A major hurdle was the time spent debating micro design decisions that a component library would settle. We selected iOS components for their popularity on mobile and developed a mid-fidelity Figma prototype that resolved the lo-fi inconsistencies.

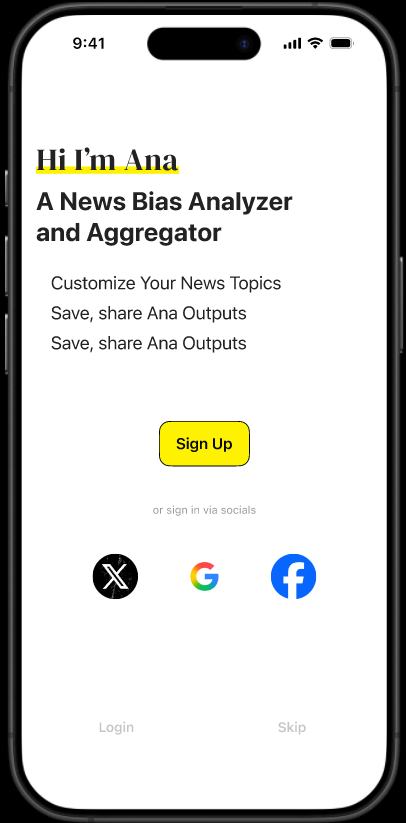

Login Page

User Authentication

Home Page

Main Dashboard

Bias Analysis Report

Detailed Analysis

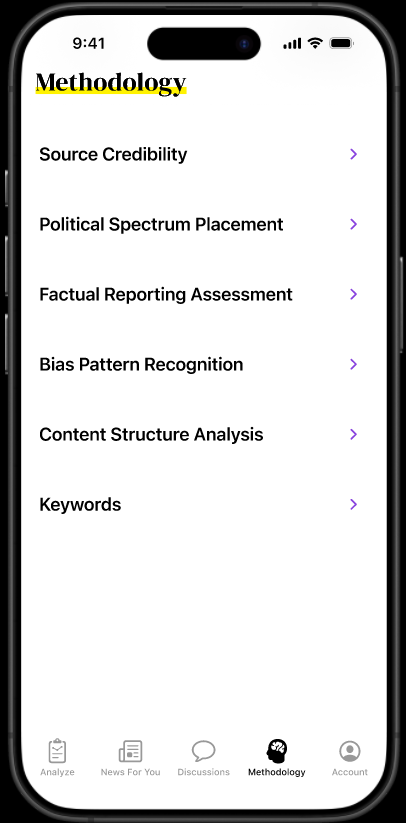

Methodology Page

AI Explanation

News For You - Browse

Article Discovery

News For You - Filters

Bias Filtering

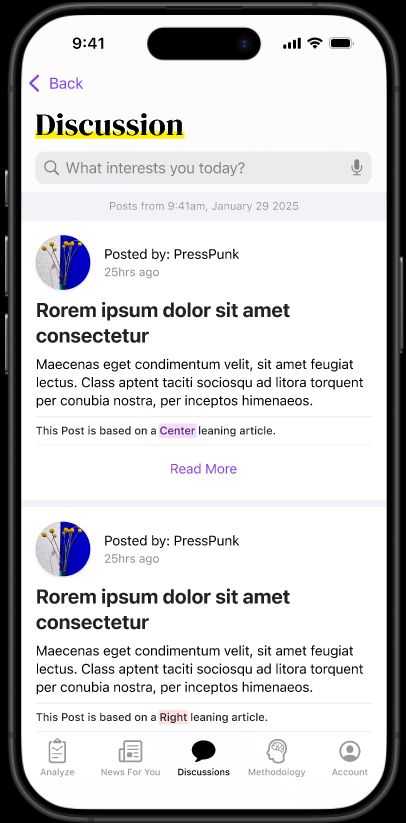

Discussions

Community Features

Methodology Details

Keyword Analysis

Early Branding

Though branding was a low priority, we used color in the mid-fi prototype to establish hierarchy and to preview the final design aesthetic.

Trustworthy White

Heavy usage of white for trustworthy look/feel, giving space for political expression

Yellow Highlighter

Represents the act of highlighting key information

Purple Interactables

A deliberate departure from the default iOS blue, chosen to avoid implying political favoritism

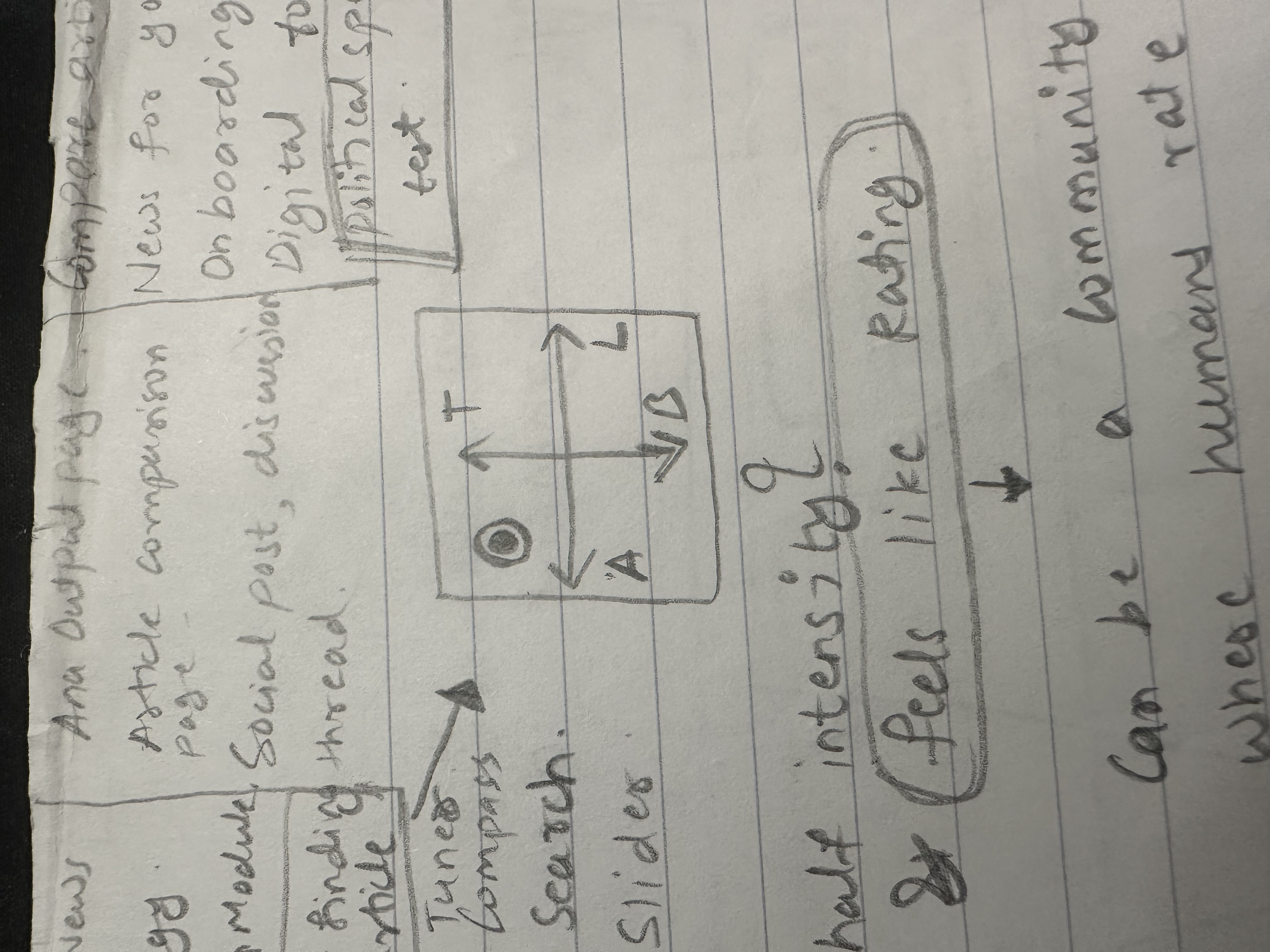

Bias Slider Scale: From Research to Design

The bias slider scale emerged from our literature review of the Media Bias/Fact Check (MBFC) methodology, where political bias is represented on a linear scale. Through iterative design exploration, we evolved from conceptual sketches to a refined interactive component.

The design process involved exploring various metaphors—from audio tuners and compass designs to speedometer interfaces—before settling on a clean slider that users could intuitively understand and interact with.

Design Evolution

Figma Inspiration

Visual representation idea source

Literature Foundation

Media Bias/Fact Check (MBFC) methodology scale

X-Y Axis Exploration

Two-dimensional bias mapping concept

Speedometer Concept

Circular bias indicator exploration

Final Slider Component

Clean, intuitive bias scale interface - primary design solution

Design Insight: The evolution from complex metaphors to a simple slider demonstrated that users preferred familiar interface patterns over novel visualizations when dealing with sensitive political content. The final design prioritized clarity and trustworthiness over visual novelty.
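The slider's role in article discovery can be sketched as a filter over a linear bias scale. Everything specific here is an assumption for illustration: the `Article` type, the -10 to +10 scale, the window width, and the function name are hypothetical and do not describe the prototype's actual behavior.

```python
from dataclasses import dataclass
from typing import List, Sequence

@dataclass(frozen=True)
class Article:
    title: str
    bias_score: float  # assumed linear scale: -10 (left) to +10 (right)

def filter_by_slider(
    articles: Sequence[Article], position: float, width: float = 2.0
) -> List[Article]:
    """Return articles within `width` of the slider position, nearest first."""
    matches = [a for a in articles if abs(a.bias_score - position) <= width]
    return sorted(matches, key=lambda a: abs(a.bias_score - position))

articles = [
    Article("A", -6.0),
    Article("B", -1.0),
    Article("C", 0.5),
    Article("D", 7.0),
]

# Slider centered: only articles near the middle of the scale are shown.
print([a.title for a in filter_by_slider(articles, position=0.0)])  # ['C', 'B']
# Slider moved right: the window follows the thumb.
print([a.title for a in filter_by_slider(articles, position=7.0)])  # ['D']
```

A single position on a single axis keeps the interaction as simple as the final component: moving the thumb changes which perspectives appear, with no extra controls to learn.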

RITE Method: Rapid Iterative Testing & Evaluation

We tested the mid-fidelity prototype using the RITE (Rapid Iterative Testing and Evaluation) method: two rounds of usability testing, with targeted design changes after each session.

This agile approach quickly identified and fixed usability issues, ensuring the final prototype directly responded to user needs while working under pressure.

Design Strengths Confirmed

- Aesthetic and Tone: Users responded positively to the soft, authoritative color scheme, which established credibility

- Clarity: Users found the general layout and typography intuitive

Areas for Improvement

- Information Hierarchy: Users needed to see "Main Takeaways" immediately, but the initial design buried this content below the scroll

- Interactive Clarity: Key interactive elements lacked strong visual cues to show they were clickable

- Screen Real Estate: Mobile layout used space inefficiently with oversized elements

- Sharing Options: Users wanted to share specific sections, not just the entire article

Iterative Design Changes

This feedback directly informed our design pivot. The redesign incorporated the following improvements:

- Elevated "Main Takeaways" for immediate visibility

- Collapsed summary to prioritize key points

- Added visual borders to clarify button interactivity

- Implemented prominent flag and share icons

This iterative loop demonstrates how we refined Ana from a strong concept into a functional, intuitive prototype.

Reflection & Next Steps

Looking back on our journey and forward to Ana's potential: What we learned, what we'd do differently, and where we go from here.

Ana represents a promising step toward news tools built on trust and media literacy, combining AI's analytical power with user-centered design to empower critical news understanding.

Project Reflection

What Went Well

- User-Centered Research: Our mixed-methods approach successfully uncovered deep insights about user perception gaps and bias blind spots

- Iterative Design Process: The RITE method and RFP feedback led to significant usability improvements

- AI Framework Development: Successfully created a rule-based, transparent bias detection system

- Educational Integration: Seamlessly combined bias detection with media literacy learning

What Could Be Improved

- Demographic Diversity: Our sample was heavily left-leaning (68%) and limited in age range, potentially skewing insights

- AI Explainability: Current system struggles with nuanced explanations for complex bias cases

- Long-term Validation: Limited testing period for measuring sustained behavior change

- Technical Scalability: Resource-intensive analysis may create latency issues at scale

Project Limitations

While Ana shows promise, several limitations emerged during our research and development process that future iterations should address:

Sample Bias

Our participant pool's political homogeneity and limited age range (20-34) may not represent broader user needs, particularly among conservative users or older demographics who consume news differently.

AI Complexity

The subject/perspective confusion we observed highlights AI's current limitations in understanding nuanced bias, particularly when article subjects differ from editorial perspectives.

Scale Validation

While prototype testing showed promise, real-world deployment with thousands of users and diverse news sources would likely reveal additional challenges and edge cases.

Next Steps

Looking forward, Ana's next steps involve expanding capabilities and validating effectiveness with broader audiences. Future work should focus on three key areas:

Enhancing AI Explainability

Develop AI to provide more nuanced explanations for bias ratings, particularly for complex cases like subject/perspective confusion. This includes:

- Advanced prompt engineering for contextual analysis

- Multi-layered bias detection beyond left/right paradigms

- Visual explanations showing AI reasoning process

Broader Demographic Testing

Conduct studies with more politically and demographically diverse users to ensure Ana's effectiveness for all users. This expansion should include:

- Conservative and moderate political perspectives

- Broader age ranges (35-65+) and cultural backgrounds

- International users with different media landscapes

Building Interactivity

Implement user-requested flagging and feedback mechanisms to create a "human-in-the-loop" system that refines AI assessments over time, building trust and engagement:

- User flagging system for bias detection accuracy

- Community-driven bias validation and appeals process

- Continuous learning algorithms that adapt to user feedback
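A first step toward such a human-in-the-loop system could be as simple as counting disagreements. The sketch below queues an article for human review once enough users dispute the AI's label. The threshold, the data shapes, and the function name are hypothetical, and the sketch covers only flag aggregation, not the continuous learning step.

```python
from collections import Counter
from typing import Iterable, List, Mapping, Tuple

REVIEW_THRESHOLD = 3  # assumed number of disputing flags that triggers review

def articles_needing_review(
    flags: Iterable[Tuple[str, str]],
    ai_labels: Mapping[str, str],
    threshold: int = REVIEW_THRESHOLD,
) -> List[str]:
    """Return IDs of articles where enough users disputed the AI's bias label.

    `flags` holds (article_id, user_suggested_label) pairs, and `ai_labels`
    maps each article_id to the label the AI assigned. Flags for unknown
    articles are ignored.
    """
    disputes = Counter(
        article_id
        for article_id, user_label in flags
        if article_id in ai_labels and ai_labels[article_id] != user_label
    )
    return sorted(a for a, count in disputes.items() if count >= threshold)

ai_labels = {"a1": "Left-Center", "a2": "Right"}
flags = (
    [("a1", "Least Biased")] * 3   # three users dispute a1
    + [("a2", "Right")] * 5        # five users agree with a2
    + [("a2", "Right-Center")]     # one user disputes a2
)
print(articles_needing_review(flags, ai_labels))  # ['a1']
```

Routing disputed articles to a review queue, rather than letting flags change labels directly, keeps a human accountable for each revision and guards against coordinated flagging.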

Final Reflection

Ana is more than a bias detection tool—it's an educational platform helping users develop critical thinking for all media consumption. In today's fragmented information landscape, we need technologies that help us think better, not just tell us what to think.

This project demonstrates that combining rigorous user research with thoughtful AI development can create solutions addressing real user needs while building essential media literacy skills.

— The Fantastic Four Team